As we know, many Web 2.0 implementations require some new tricks from our analytics packages. As Eric P and others have written, the old-guard measure of the "hit" (or something like it) is possibly making a comeback as we ponder how to measure (and what to measure) in this more dynamic world we are in now.

Enter Ajax

One of the more interesting Web 2.0 application types to tackle is the Ajax implementation. Built on the idea that you don't have to refresh the entire page to present updated information to the visitor, Ajax is really gaining some steam in the industry. And rightfully so...it's really quite powerful, very efficient, and a great example of a "rich" user experience. The basic idea is that instead of a link triggering a new page request, a link fetches only a smaller amount of content that is displayed back to the visitor quickly. To confuse things a bit, this content can come in a variety of formats (XML, HTML, JSON, etc.), and can be requested in a number of different ways (XMLHttpRequest, IFrame, etc.). From an analytics perspective, the format and transport don't really matter. What's important is that the visitor has taken some action, and we likely want to know what the action was (and sometimes we want to know what was inside the response too...I'll get to that in another post).

A major issue we face then is how to treat pages vs. the (many) potential requests that follow on the page. These secondary (and subsequent) requests are not pageviews in the traditional sense. So, what do we call these non-pageviews? What do we track, and how? Of course, it depends on your needs, but let's dive into an example to see how others are thinking about it.

Example: Microsoft

One of the more important properties on the internet is working on a new, soon-to-be-released Ajax facelift. The very smart folks over at Microsoft have a major redesign in the works that is very impressive and offers us a very heavy example of Ajax from which to learn. If you go to the current Microsoft Homepage, you'll see an example of Ajax in the tab interface about two-thirds of the way down the page (in the center). If you hover over the "Latest releases" menu heading, you'll see some new text and a new image appear. The images are dynamically returned to the browser in an Ajax call. Side note: if you stay hovered over one of the menu headings for a second, a tracking hit is sent...but only one time per menu item to avoid overkill on mouse hover tracking.
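The hover rule in that side note (send a hit only after the pointer has rested on a menu heading for about a second, and only once per heading) can be sketched roughly like this. This is not Microsoft's actual code: the `HoverTracker` class, its method names, and the 1000 ms default are all assumptions made for illustration.

```typescript
// Hypothetical helper: decides when a hover should produce a tracking hit.
class HoverTracker {
  private readonly dwellMs: number;                      // assumed threshold
  private readonly entered = new Map<string, number>();  // item -> hover start (ms)
  private readonly reported = new Set<string>();         // items already tracked

  constructor(dwellMs: number = 1000) {
    this.dwellMs = dwellMs;
  }

  // Call when the pointer enters a menu heading.
  enter(item: string, nowMs: number): void {
    this.entered.set(item, nowMs);
  }

  // Call when the pointer leaves; the interrupted hover no longer counts.
  leave(item: string): void {
    this.entered.delete(item);
  }

  // Call from a timer; returns true at most once per menu heading.
  shouldSend(item: string, nowMs: number): boolean {
    const start = this.entered.get(item);
    if (start === undefined || this.reported.has(item)) return false;
    if (nowMs - start < this.dwellMs) return false;
    this.reported.add(item);
    return true;
  }
}
```

In a real page, `enter` and `leave` would be wired to mouseover and mouseout handlers, and `shouldSend` would be checked from a timer before the tracking request goes out.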

However, if you want to find out more about Windows Vista, you might click on the upper left navigation "Windows" link, then click "Windows Vista". Three pageviews altogether.

Now try this. Go to the new Microsoft "preview" site, which features some new Ajax look-and-feel. Now if you want to find out about Windows Vista, you might use the "Microsoft Site Guide" menu on the upper right, selecting "Products & Related Technologies". This makes an Ajax request, but instead of refreshing the page, presents you with a new pop-up window over the top of the page. Now select "Windows", and the window is updated with more dynamic information. Now select "Windows Vista" and you are sent to a new page. Two pageviews with two extra requests.

You could argue that the pop-up menu looks like a page, and you might want to track it as a pageview. But you could also argue that since you were still technically on the homepage, it should be tracked as a separate type of request. For sure, the third click, which simply rendered new data in the menu, was different from a full page request.
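To make the counting concrete, here is a minimal sketch that tallies the preview-site path by request type. The `TrackedRequest` shape and the `tally` function are hypothetical, and labelling both menu interactions as "ajax" is just one of the two readings discussed above.

```typescript
// Hypothetical classification of each request in a visit.
type RequestKind = "pageview" | "ajax";

interface TrackedRequest {
  label: string;
  kind: RequestKind;
}

// Count how many requests of each kind a visit produced.
function tally(requests: TrackedRequest[]): Record<RequestKind, number> {
  const counts: Record<RequestKind, number> = { pageview: 0, ajax: 0 };
  for (const request of requests) {
    counts[request.kind] += 1;
  }
  return counts;
}

// The preview-site path to Windows Vista described above.
const previewPath: TrackedRequest[] = [
  { label: "Homepage", kind: "pageview" },
  { label: "Products & Related Technologies", kind: "ajax" },
  { label: "Windows", kind: "ajax" },
  { label: "Windows Vista", kind: "pageview" },
];

console.log(tally(previewPath)); // { pageview: 2, ajax: 2 }
```

If you decided the pop-up menu should count as a page after all, you would only change the `kind` on that one entry, and the totals would shift accordingly.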

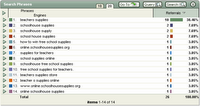

Tracking Ajax Requests

In order to track these requests differently, we have defined different event "types" via a new parameter. In this case, the parameter is WT.dl, with values to describe a pageview or an Ajax request (or a mouseover, or an RSS feed view, or a "start" of a video...you get the idea). Within the analytics tool (WebTrends - no great surprise there ;-), we simply leverage the powerful analytics reporting (or new Marketing Lab Warehouse) to track these events independently (or together if needed). Pageviews remain as pageviews. Other requests are defined appropriately and tracked as needed to provide accurate reporting.
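As a rough illustration of tagging each request with an event type, the sketch below builds a tracking URL that carries a WT.dl value. Only the WT.dl parameter name comes from this post. The numeric codes, the `uri` and `title` parameter names, the collector address, and the `buildTrackingUrl` function are all placeholders I made up for the example, so check your own tag documentation for the real values.

```typescript
// Placeholder event codes: NOT actual WebTrends values.
const EVENT_TYPE = {
  pageview: "0",
  ajax: "1",
  mouseover: "2",
} as const;

type EventName = keyof typeof EVENT_TYPE;

// Build a request URL for a (hypothetical) collection endpoint.
function buildTrackingUrl(
  collector: string,
  event: EventName,
  uri: string,
  title: string
): string {
  const url = new URL(collector);
  url.searchParams.set("WT.dl", EVENT_TYPE[event]); // event type for this hit
  url.searchParams.set("uri", uri);                 // assumed parameter name
  url.searchParams.set("title", title);             // assumed parameter name
  return url.toString();
}

// Example: the "Windows" selection inside the pop-up menu.
const hitUrl = buildTrackingUrl(
  "https://stats.example.com/hit.gif", // hypothetical collector
  "ajax",
  "/site-guide/windows",
  "Site Guide: Windows"
);
console.log(hitUrl);
```

On the client, a URL like this is typically requested as an image so that it fires without interrupting the visitor. Keeping the event type in a single parameter means reports can filter pageviews from everything else without guessing from the URL.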

This type of tracking leverages the client to send the request to the analytics tool (I'll call it Asynchronous Client Tracking of AJAX, or ACTAjax - ok, this is why I'm not in Marketing :-). There are other methods for collecting data, though, that might become necessary when you don't want the client to send the data (for security or privacy reasons, or because the scenario just doesn't allow it), or when the client isn't a traditional browser (tracking API requests, RSS feeds, some mobile devices, etc.). For these reasons, you may also want to have other collection strategies ready (server-side requests, traditional logfile analysis, etc.). I'll talk more about these types of requests in a subsequent post.

More Info

An excellent team of speakers is putting together a series of great presentations for our upcoming Marketing Performance In Action conference in Orlando (Oct 24-25). Our own Clay Moore and Brant Barton (co-founder and VP of Business Development at Bazaarvoice) will be speaking about tracking Web 2.0 technologies (Optimizing Your Web 2.0 Programs with WebTrends) in their session on Wednesday.

I'll be at the MPIA conference as well - if you're going to be there, please drop me a line (elbpdx @ gmail) and let's be sure to connect!

Otherwise, if you have any best practices, or other excellent ideas...feel free to leave a comment here!

Filed in: analytics, ajax